Tech Brief #11 | OpenAI misses its 2025 targets and delivers a leap in image models

While OpenAI, according to internal reports, missed its growth and revenue targets for 2025 and investors on the secondary market are growing increasingly skeptical, the company has delivered a massive productivity leap in image generation with "Images 2", almost unnoticed. An analysis of the gap between faded consumer hype and the real, often underestimated B2B value of generative AI.

I) OpenAI misses revenue and user targets for 2025

OpenAI has missed internal targets for 2025, including reaching one billion weekly active users and its revenue targets for ChatGPT. As a result, investors on the secondary market are finding it increasingly difficult to sell their shares at the current USD 850 billion valuation. To prevent churn, OpenAI is reportedly even handing out 100% discounts to paying Plus users. Source: The Information

Strategic assessment: This could also mean that OpenAI has hit the bottom in terms of public perception. Anthropic has recently been extremely present in the news and has left OpenAI behind in many respects. In one respect, however, OpenAI remains ahead: available compute capacity. That could well lead to a trend reversal, as many developers have already switched from Claude to Codex because they were disappointed by Anthropic's throttling of model performance.

II) "Images 2" is an enormous leap for image models

Almost simultaneously, and without much media fanfare, OpenAI released its new image generation model "Images 2". For the first time, the model combines visual creation with deep logical reasoning. It researches the context on its own before generating and drastically reduces long-standing weaknesses such as errors in rendering text and lettering. Source: The Decoder

Strategic assessment: While the debate about superintelligence (AGI) is stagnating, operational processes are being completely automated in the background. The ability to generate reliable visual assets such as presentation graphics or storyboards in fractions of a second fundamentally changes the work of agencies and marketing departments.

Tech Brief #10 | AI agents as a distribution platform, automation potential in customer service, and deepfake defense

The rapid development of generative AI requires structural adjustments in commerce, customer service, and IT security. While Mondelez is deliberately opening its digital infrastructure to AI crawlers, Zoom is introducing biometric iris scans to stop deepfake applicants in remote interviews. In parallel, the late build-out of human support at Trade Republic shows how much automation potential customer service actually holds.

I) Mondelez overhauls its digital strategy for AI agents

The food giant is radically rebuilding its digital commerce strategy so that its products are found by AI agents. Previously, it had blocked AI crawlers to protect its IP, with the result that core brands such as Oreo appeared in only 10% of relevant AI chats. After unblocking the crawlers and thoroughly optimizing its websites for machine readability, Oreo's presence in AI outputs rose to around 70%. Source: Digiday

Strategic assessment: GenAI chatbots are a new distribution platform, comparable to the historic rise of the internet or the smartphone. If AI pre-filters or directly makes purchase decisions for consumers in the future, brands must work on their visibility to agents and actively help shape the new customer journeys.
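
One common way to block or admit crawlers is a robots.txt rule, and the following minimal sketch illustrates the before-and-after effect described above. It is illustrative only: the article does not say how Mondelez blocked or unblocked crawlers, so the robots.txt contents, the example URL, and the helper `crawler_allowed` are assumptions, and `GPTBot` serves merely as an example crawler token. The check itself uses Python's standard `urllib.robotparser` module.

```python
from urllib.robotparser import RobotFileParser


def crawler_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical "before" state: one AI crawler is blocked, everyone else is allowed.
BLOCKED = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

# Hypothetical "after" state: all crawlers are allowed.
OPENED = """\
User-agent: *
Allow: /
"""

# Placeholder URL, not a real product page.
URL = "https://www.example.com/products/cookies"

print(crawler_allowed(BLOCKED, "GPTBot", URL))  # blocked configuration
print(crawler_allowed(OPENED, "GPTBot", URL))   # opened configuration
```

A check like this only covers crawler access. The machine-readability work mentioned in the article (for example structured product data) is a separate effort that this sketch does not address.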

II) Trade Republic hires 1,000 customer service employees

The neobroker Trade Republic, which manages more than EUR 100 billion in assets for 8 million customers, is hiring 1,000 human service employees. After massive criticism and complaints from consumer protection groups, the company is moving away from its previous customer service approach, which relied almost exclusively on chatbots and automation, and now offers 24/7 support by phone and live chat. Source: Manager Magazin

Strategic assessment: The superficial reading is that purely tech-based support has failed here. My perspective is different: a company managed to scale for years in a heavily regulated industry almost entirely without regular human customer service. This shows that most companies are currently barely tapping their automation potential in service.

III) Zoom partners with "Worldcoin" to identify humans

Zoom is integrating the biometric technology "World ID" (from World, the company co-founded by Sam Altman) to verify participants in video conferences as real humans. The new "Deep Face" feature matches the user's live video feed against a previously created cryptographic iris scan. The goal is to prevent AI-generated deepfakes or avatars from joining sensitive business meetings unnoticed. Source: Axios

Strategic assessment: Extreme caution is warranted when hiring for remote jobs, especially software developers. There is a growing number of incidents in which applicants have AI avatars or external "proxies" stand in for them in video interviews in order to obtain lucrative positions or access to internal repositories under false pretenses.

Tech Brief #9 | Bain hack and agent platform

While NVIDIA is standardizing the era of autonomous AI agents in the enterprise sector with a new open platform, the security architecture at the tier-1 consultancies is collapsing. After McKinsey, Bain's internal AI tool has now been hacked in just 18 minutes.

I) The next consulting hack: Bain

Following the data leak at McKinsey's "Lilli", the cybersecurity startup CodeWall has now also hacked the internal AI tool of the strategy consultancy Bain, in exactly 18 minutes. With the captured tokens, an attacker could theoretically have impersonated any Bain employee in the system. Source: Manager Magazin

Strategic assessment: This reveals a systemic security problem at the large management consultancies, which are currently trying to roll out their own AI solutions for themselves and their clients. Absolute confidentiality is the most important asset in the consulting business, and that requires far more comprehensive security measures.

II) NVIDIA "Open Agent Development Platform Toolkit"

NVIDIA has released a toolkit to standardize the development of autonomous AI agents for knowledge workers across industries. Large software companies such as SAP, Salesforce, and Atlassian are already integrating this toolkit to bring their own agent networks to the mass market. Source: NVIDIA Blog

Strategic assessment: A month ago, NVIDIA had already released NemoClaw as a secure alternative to the extremely successful OpenClaw. This initiative shows how central agents are to further growth with AI. It will be interesting to see how quickly SAP and others now actually release useful solutions of their own on this basis.

Tech Brief #8 | Exponential AI growth, micro-ransomware, and sovereignty

Anthropic's revenue explodes to USD 30 billion, proving the real B2B value of AI beyond pilot projects. At the same time, Anthropic's new hacking model "Mythos" shows that falling cyberattack costs open up entirely new markets for protection against micro-ransomware. Beyond the AI hype, France's switch from Microsoft to Linux also marks a turning point: digital sovereignty is becoming a hard purchasing criterion in Europe. A strategic assessment.

I) Anthropic "Mythos": AI as a cyber weapon and new product opportunities

Anthropic has announced "Mythos", a specialized cybersecurity model capable of identifying vulnerabilities in existing software. The model is so powerful (and therefore also dangerous) that the broad release has been halted for now so that critical software can be patched in advance together with selected companies and stakeholders. Source: The Verge

Strategic assessment: Autonomous models like this push the marginal cost of cyberattacks toward zero. Alongside the obvious risks, it is worth keeping an eye on the massive new product opportunities, for example in the cybersecurity market for private individuals and small businesses. When automated attacks cost almost nothing, ransomware attacks that extort only a few hundred or a few thousand euros for private photos suddenly become worthwhile. As in the enterprise market, this creates enormous demand for scalable B2C products that seamlessly combine active defense measures with cyber insurance.

II) Anthropic passes the USD 30 billion mark

Anthropic's annualized revenue run rate (ARR) more than tripled in the first quarter of 2026: from just under USD 9 billion at the end of 2025 to more than USD 30 billion now. This massive increase is driven primarily by deep B2B integrations and software automation (Claude Code) at large customers. Source: Financial Times

Strategic assessment: This exponential growth on what is now a substantial base is further evidence of the enterprise readiness of generative AI. The technology is no longer being bought for isolated experiments or chatbot gimmicks, but for value-creating applications in a corporate context.

III) France migrates from Microsoft to Linux

The French administration is pushing ahead with its exit from the Microsoft ecosystem and is migrating strategically relevant parts of its public IT infrastructure to Linux and European open-source solutions. Source: TechCrunch

Strategic assessment: Digital sovereignty has long ceased to be just a political buzzword and is becoming a purchasing criterion for public-sector customers and large European enterprises. For software vendors and services companies, this opens up opportunities through sovereign, locally hostable, or open-source-based deployment models.

Tech Brief #7 | Scarce compute capacity and hidden open-source models from China

OpenAI is pausing development of the video generator "Sora" in order to prioritize scarce compute capacity for the enterprise market. In parallel, the startup Cursor's use of Chinese open-source models highlights the challenges facing so-called wrappers.

I) OpenAI halts Sora

OpenAI is putting its hyped video generator "Sora" on ice for now. This comes just after it had signed an agreement with Disney covering a USD 1 billion investment and the use of IP rights, which is now likely moot as well. Source: WSJ

Strategic assessment: On the one hand, this step can be seen as part of OpenAI's current refocusing. As the company falls further behind Anthropic in the B2B race for enterprise customers, it is now rigorously concentrating its development resources on offerings for this core target group. On the other hand, the move bluntly exposes the current scarcity of compute. Video generation consumes vast amounts of capacity, which is now being consistently redirected to the most valuable use cases.

II) Cursor uses a Chinese open-source model

The highly valued coding startup Cursor (current valuation estimated at between USD 30 billion and USD 50 billion) apparently did not develop its supposedly proprietary model "Composer 2" itself. Research shows that the inexpensive Chinese open-weights model "Kimi 2.5" (from Moonshot AI) is running in the background instead. Cursor had not disclosed this fact in its marketing. Source: TechCrunch

Strategic assessment: This incident makes one thing unmistakably clear: a reseller (wrapper) of AI services such as Cursor has to fall back on cheap open-source models, the best of which currently all come from China, in order to generate an attractive margin. For many enterprise users, however, this logic makes no sense. It is strategically far smarter to use the most capable frontier models directly (for coding, for example, Anthropic's Claude), which are roughly six months further ahead in development. Their token costs are, in the end, lower than the cost of a human developer by a factor of 100 anyway.

Tech Brief #6 | OpenAI arms the consultants and Bezos buys up industry

To break through the sluggish adoption of AI in traditional companies, big tech players are resorting to drastic measures. OpenAI is forging far-reaching implementation alliances with tier-1 consultancies, while Jeff Bezos is simply planning a USD 100 billion roll-up fund to buy industrial companies outright and transform them himself.

I) OpenAI's alliance with tier-1 consultancies

Under the name "Frontier Alliances", OpenAI has entered into partnerships with the largest consulting firms, including McKinsey, BCG, Accenture, and Capgemini. As with the joint ventures with large private equity funds presented last week, the aim is to drastically accelerate market penetration in large corporations. OpenAI is providing the consultancies with so-called "Forward Deployed Engineers" so that they can integrate agent-based workflows directly into their clients' core processes. Source: BCG press release

Strategic assessment: The other frontier labs, above all Anthropic, will certainly follow suit. Direct enterprise sales are extremely slow-moving because many customers lack the internal capacity and technological expertise for implementation. However, as the recent hack of McKinsey's own AI model "Lilli" shows, it is more than questionable whether the large strategy consultancies are really the ideal partners for the technically secure development and scaling of enterprise AI solutions.

II) Bezos plans USD 100 billion AI fund for industry

The Amazon founder is currently negotiating the launch of a USD 100 billion "Manufacturing Transformation Fund". The strategy: the fund acts like a private equity roll-up, buys established industrial companies, and radically transforms them through AI and automation. Technologically, this is to be based on Bezos' AI venture "Project Prometheus", which is working on the precise simulation and optimization of physical production processes. Source: Wall Street Journal

Strategic assessment: On the one hand, one could say, tongue in cheek, that this is a highly pragmatic way to solve Prometheus's customer acquisition problem: you simply buy the potential clients. On the other hand, this move shows how strongly an extremely successful entrepreneur and tech insider believes in the operational transformation potential of AI in the physical economy.

Tech Brief #5 | PE funds as AI catalysts and the McKinsey hack

The operational reality of enterprise AI is changing rapidly. In this Tech Brief, we analyze how private equity firms are becoming the decisive AI catalyst for mid-sized companies through new joint ventures with Anthropic and OpenAI, and why the successful hack of McKinsey's AI platform shows that security architecture is lagging far behind the current transformation.

I) Private equity partnerships as an AI catalyst

OpenAI and Anthropic are negotiating joint ventures with leading private equity firms. The idea is to use "Forward Deployed Engineers", modeled on Palantir, to deploy AI in the PE firms' portfolio companies. According to the reports, OpenAI is negotiating with TPG, Bain Capital, Advent, and Brookfield, while Anthropic is working on a structure with Blackstone, Permira, and Hellman & Friedman. Source: Reuters on the Anthropic PE JVs; Reuters on the OpenAI PE JVs

Strategic assessment: A promising approach to accelerating the slow adoption of AI in traditional companies. In the medium term, this move is also likely to put pressure on competitors of the PE portfolio companies to use AI more intensively than before.

II) McKinsey's Lilli AI reveals serious security vulnerabilities

The cybersecurity startup CodeWall hacked McKinsey's internal generative AI platform "Lilli" in less than two hours. The result: full read and write access to the production database as well as to 46.5 million internal chat messages, 728,000 files, and 57,000 user accounts. The 95 system prompts that govern the AI's behavior and guardrails were also exposed and could be overwritten. Source: CodeWall

Strategic assessment: This incident is likely to cause lasting damage to clients' trust in the ability of the large management consultancies to provide technical support for the AI transformation. Absolute confidentiality and trust are the key pillars of the professional value system in consulting. It is also another wake-up call that security architecture needs massively more attention.

Tech Brief #4 | Anthropic: explosive growth meets the Department of War

A massive leap in product usefulness drives Anthropic's revenue to USD 19 billion, but the conflict with the Trump administration is escalating. Why the "supply chain risk" designation does pose a risk, but lasting economic damage in the run-up to the midterms is an unlikely scenario.

I) Anthropic: everything is explosive right now

The annualized revenue run rate has recently jumped to around USD 19 billion, compared with roughly USD 1 billion at the end of 2024. At the same time, the US administration is bringing out the heavy artillery: the Pentagon has designated the company a "supply chain risk" and banned it from federal contracts because Anthropic refuses to release its models for autonomous weapons systems and mass surveillance. Anthropic is now suing over this decision. Source: Bloomberg

Strategic assessment: The enormous growth is a clear indication that the products, above all in enterprise and coding, have made a massive leap in usefulness for users. As for the dispute with the Pentagon, I lean toward the view that, for all the current noise, a solution that is workable for Anthropic will ultimately be found. There are solid economic reasons for this: first, key shareholders such as Amazon and Google maintain excellent relations with the current US administration; second, practically all hyperscalers now have substantial business that depends directly on Anthropic. A hard break would be extremely negative for the economy and the markets. With the midterms approaching, it is highly unlikely that the Trump administration would accept such severe economic damage in the tech sector.

Tech Brief #3 | Asymmetric AI threats, hesitant executives, and sovereign clouds

This Tech Brief examines the growing threat from AI-supported cyberattacks, the low AI usage and absent productivity gains at executive level, and Apple's push for physically separated cloud infrastructure.

I) Cyberattacks reach a new high; prompt injection goes mainstream

Google's Cybersecurity Forecast 2026 shows a general increase in cyberattacks and predicts that AI will massively amplify social engineering and the automation of attacks over the course of the year, including a rapid rise in prompt injection attacks on LLM agents in corporate environments. Source: Cybersecurity Forecast 2026 (Google)

Strategic assessment: While earlier scams relied on sophisticated emails and text messages, the next wave will consist of ultra-realistic phone calls. In addition, because more and more autonomous agents are in use, often as unauthorized "shadow AI" run by employees, prompt injections (in which attackers smuggle manipulated instructions into the content the agents process) are becoming a business-critical threat. In short: attackers are currently using AI far more effectively than the companies themselves.

II) Executives barely use AI; only 11% have seen a productivity jump so far

A survey of around 6,000 executives (US, UK, DE, AU) found that although 69% of companies say they "use" AI, the actual intensity is extremely low. Usage averages only about 1.5 hours per week, and 28% of executives do not use AI at all. Unsurprisingly, 89% report no productivity increase whatsoever over the past three years. Source: "Firm Data on AI" working paper, National Bureau of Economic Research

Strategic assessment: These data confirm the prevailing gut feeling: there is broad, superficial adoption but hardly any real redesign of processes. Executives who have only minimal day-to-day contact with AI risk misjudging the maturity of the technology. As a result, they may miss both the right timing and the strategically decisive areas for a consistent rollout.

III) Apple demands physically isolated Google Cloud for Siri

Apple is negotiating with Google about hosting Siri's new AI capabilities on Google Cloud infrastructure. To uphold its strict privacy promises, Apple is demanding physical "co-location", that is, physically separated servers within the data centers that even Google employees cannot access. Source: The Information

Strategic assessment: This case shows that strict data separation at the hardware level is indeed negotiable. Just as the Schwarz Group successfully pushed through sovereign cloud terms with GCP at the end of 2024, companies with truly differentiating, proprietary data should not blindly accept the hyperscalers' standard terms.

Tech Brief #2 | Opposing AI strategies in focus

This Tech Brief contrasts two opposing AI approaches: Block's efficiency strategy versus Duolingo's growth strategy. In a market environment that currently leans defensive, it is crucial for CEOs not to be guided by short-term sentiment.

I) Block announces 40% job cuts; stock +24%

CEO Jack Dorsey is reducing the workforce by around 4,000 employees. His argument: despite a robust business model, AI tools have changed the economics of running the company and enable smaller teams to scale in a big way. The stock rose by more than 20% after the announcement. Source: Jack Dorsey / X

Strategic assessment: So far, there have been only a few examples of restructurings of this kind in the tech industry, such as Twitter (also founded by Jack Dorsey) after the takeover by Elon Musk, or Klarna. However, those were probably due more to organizational inefficiencies than to real AI progress. I expect significantly more of these substantial, AI-driven reorganizations in the future, going far beyond the usual savings of 10-20%.

II) Duolingo sacrifices profit growth for product investments; stock -21%

Luis von Ahn has announced a deliberate shift from short-term profitability to investments in broader access to premium AI features. This, together with less aggressive monetization, is intended to boost growth and reach around 100 million daily active users (DAUs) by 2028. The stock fell by about 21% in after-hours trading. Source: Duolingo Q4/FY25 Shareholder Letter

Strategic assessment: This is almost the opposite of Block's strategy, but despite the immediate market reaction it is not necessarily the wrong one. In an environment marked by uncertainty, investors prefer the "safer" path. Even so, Duolingo's approach of investing heavily in user growth seems sensible to me: i) it enlarges the company's "data moat" (the competitive advantage from proprietary data) and ii) user acquisition will only become more expensive in the future.

Conclusion

The markets are currently driven by two major fears: first, that the hyperscalers' enormous AI investments will not pay off; second, that AI will replace almost all white-collar jobs in the medium term. Both concerns are exaggerated, but they exert an enormous pull. For CEOs, what matters is choosing a clear path and not letting the current market mood cloud their own judgment.

Tech Brief #1 | Desktop agents, back-office AI, and work intensity

This Tech Brief focuses on the evolution of autonomous AI, from OpenAI's desktop agents to the automation of highly regulated processes at Goldman Sachs. As AI pushes the limits of what is feasible, managing the rising workload becomes a central leadership task for securing teams' long-term performance.

I) OpenClaw developer joins OpenAI

Peter Steinberger is joining OpenAI to make AI agents accessible to everyone, while his project OpenClaw is being transferred to a foundation. Source: Steinberger's blog

Strategic assessment: The leading AI labs will bring universal agents modeled on OpenClaw (equipped with appropriate security safeguards) to market. These will be able to perform any task on a computer that a human user can.

II) Goldman Sachs develops agents with Anthropic

Over six months, a joint team developed AI agents to automate high-volume back-office processes such as trade accounting and client due diligence (KYC). Source: CNBC

Strategic assessment: AI agents are evolving beyond the original "killer applications" of customer service, content creation, and programming toward complex operational processes. If this works at a strictly regulated bank like Goldman Sachs, it will be applicable in many other scenarios as well.

III) Study on the effects of AI on employees

A field study at a technology company shows that the introduction of generative AI increased work intensity rather than reducing it: employees worked faster, expanded the scope of their tasks, multitasked more, and experienced an increasing blurring of the boundaries of working time. Source: HBR

Strategic assessment: Greater self-efficacy leads to higher motivation (and a willingness to work longer). But because the individual human increasingly becomes the limiting factor for what can be achieved, preventing burnout should be given more weight.

The Renaissance of "Make"

For a decade, the CIO playbook was simple: Buy SaaS and offshore operations to low-cost locations. AI is inverting this logic. Why "Productivity Arbitrage" is replacing labor arbitrage, and why the plummeting cost of code is making custom development attractive again.

How do developments around AI impact the "Make-or-Buy" decisions for software in large enterprises? In the recent past, three major trends have dominated:

1) Onshoring / Offshoring / Shared Service Centers (SSC) to reduce internal costs through scaling and labor cost arbitrage.

2) Rampant tool proliferation, as more and more SaaS tools are purchased directly by the business because no complex system integration is required.

3) Growing dependence on "Systems of Record" (ERP, CRM, etc.), where the company's most critical data is stored.

Here are our first observations on how these trends are currently shifting:

re 1) From Labor Arbitrage to Productivity Arbitrage

AI-based automation is increasingly becoming a relevant alternative to relocating processes to structures with greater economies of scale or lower wage costs. For instance, IBM has replaced more than 90% of its HR functions—previously handled by up to 8,000 employees in SSCs or offshore—with AI agents.

Walmart goes a step further, having built its own AI factory called "Element." Here, AI applications are no longer viewed as individual projects but as products rolling off a standardized production line. The focus is no longer on relocation, but on increasing employee productivity.

re 2) Consolidation and the Renaissance of In-House Development

The decentralized procurement of SaaS tools has led to fragmented data, a lack of control, and high costs. While there have been consolidations within cost-cutting initiatives, most companies still have hundreds of tools in use, and studies estimate 30% to 50% of licenses go unused.

AI offers two levers here: First, it helps make the existing landscape transparent and uncovers consolidation potential. Second, in-house development ("Make") is becoming more attractive for advanced engineering organizations. With every productivity leap in developer tools, the make-or-buy calculation shifts further toward "Make."

re 3) Decoupling instead of Replacing "Systems of Record"

Klarna received a lot of attention in August last year when the CEO's statements were interpreted to mean that the fintech would replace Salesforce and Workday, at least in large part, with its own AI solutions. A strategically wiser path is often not the complete replacement of "Systems of Record," but decoupling through intelligent abstraction layers. This allows companies to regain a degree of independence by controlling the user interface and application logic themselves.

The Rule of 40 Revisited

In a market oscillating between exuberance and crisis, the Rule of 40 remains the definitive compass for valuation. Analyzing the divergence between listed software companies in DACH and the U.S., and the three strategic imperatives for sustaining a premium multiple.

The Rule of 40 is a widely used heuristic in the software industry that has become very popular over the last 10 years. It is worth revisiting because it can help companies balance their ambitions for revenue growth and efficiency.

It says that companies whose combined profitability and growth exceed 40% can achieve much higher valuation levels than companies that fall short of that mark. In our analysis, we measure profitability as the operating cash flow margin (TTM) and add the expected revenue growth for 2025.

We analyzed listed software companies headquartered in Germany and Austria, along with U.S. software companies with a market cap exceeding $1B. The sample’s median EV/Sales is 6.1x, and the median Rule of 40 metric is 35%. The 41 companies that achieve >40% command an EV/Sales multiple of 8.6x, compared to 3.8x for the 70 companies below 40%.
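
As a minimal sketch of the metric as defined above, the score is simply the sum of the two percentages, compared against the 40% threshold. The function names and the example company's figures are hypothetical and chosen for illustration; only the 8.6x and 3.8x multiples come from the sample described in the text.

```python
def rule_of_40_score(ocf_margin_pct: float, expected_growth_pct: float) -> float:
    """Rule of 40 metric: operating cash flow margin (TTM) plus expected revenue growth, both in %."""
    return ocf_margin_pct + expected_growth_pct


def clears_rule_of_40(score_pct: float, threshold_pct: float = 40.0) -> bool:
    """True if the combined figure is above the threshold (40% by default)."""
    return score_pct > threshold_pct


# Hypothetical company: 22% operating cash flow margin, 21% expected growth.
score = rule_of_40_score(22.0, 21.0)
print(score, clears_rule_of_40(score))

# Valuation premium implied by the sample figures quoted in the text:
# 8.6x EV/Sales for companies above 40% versus 3.8x for those below.
premium = 8.6 / 3.8
print(round(premium, 2))
```

On the sample figures, companies above the threshold trade at a bit more than twice the EV/Sales multiple of those below it.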

While the R² for the Rule of 40's correlation with EV/Sales ranged between 0.5 and 0.6 in 2020 and 2021, it currently stands at 0.4. The metric's explanatory power remains meaningful, though other variables also influence valuations.

During periods of market exuberance, investors prioritize growth over profitability. For instance, Bessemer determined a relative importance of 6:1 in November 2021. In contrast, during crises, the emphasis shifts towards profitability, though the balance rarely exceeds a 1:1 ratio. This is due to the compounding effect that growth can have on profitability if companies have operational leverage.

The Rule of 40 remains a straightforward yet robust predictor for valuations, offering key implications for strategy and target setting:

I) Software companies should carefully balance growth and efficiency; neglecting either one destroys value no matter which stage the company is in

II) Growth of 10% annually is the bare minimum to command EV/Sales multiples beyond 10x; the target should be in the 15% to 20% range

III) As companies mature, M&A becomes a crucial pillar of strategy to sustain this kind of growth

As always, feel free to reach out to discuss how the Rule of 40 can inform your company’s strategy or valuation goals.

The Logic Behind the 60% Cut

Klarna’s CEO recently set a shock target: reducing the workforce from 5,000 to 2,000 while continuing to grow. Is this just hubris, or calculated strategy? A look at the "triangulation approach" necessary to define realistic yet ambitious AI performance targets.

A month ago, I shared insights on AI strategy and the key building blocks of an AI roadmap. Today, I want to dive deeper into defining a quantitative performance target for AI transformations. To make this concept more concrete, let’s look at the example of Klarna, where CEO Sebastian Siemiatkowski has set a bold and much-discussed objective: reducing the company's workforce from 5,000 to 2,000—around a 60% decrease—in the medium term. This target sparked widespread attention, especially as Klarna, a multi-billion-dollar fintech, is still experiencing rapid growth with no signs of slowing down.

When it comes to setting the right performance target for AI transformations, we typically apply a triangulation approach. I recommend combining three critical components: top-down benchmarking, a bottom-up workforce analysis, and a validation of the financial implications. Each element offers a unique lens to ensure a holistic target-setting process:

1) Top-down benchmarking provides a clear view of how industry leaders and best-in-class competitors are performing. This serves as a solid foundation to identify where performance improvements can be made.

2) Bottom-up workforce analysis goes function-by-function and job cluster-by-cluster to assess the current potential for automation, as well as opportunities for effectiveness improvements. This analysis effectively defines the upper limit of what AI can achieve for the organization.

3) Finally, analyzing the impact on the financial plan helps determine whether the target is ambitious enough and aligned with the company’s long-term objectives.

These three components are interconnected and collectively allow for a well-rounded determination of the right performance target for an AI transformation.

Of course, the specifics of the approach need to be tailored to each organization’s unique situation. For instance, the workforce analysis must take into account the current level of automation and the complexity of the business. However, I hope this example provides a useful framework to help you think through the challenge of setting effective AI performance targets.

Beyond the Hype: Why "Wait and See" is a Dangerous Strategy for GenAI

As GenAI slides into the "Trough of Disillusionment," skepticism in boardrooms is rising regarding the gap between massive infrastructure spend and actual revenue. However, using current ROI doubts as an excuse to pause is risky. Here is a framework for a pragmatic approach—from FinOps to strategic workforce management—to become AI-ready without burning cash.

GenAI has arrived at the "Peak of Inflated Expectations" on the Gartner Hype Cycle (or has already passed it), and particularly in Germany, one often encounters a certain sense of vindication at the board level: "Good thing we didn't jump on this trend more aggressively. There are hardly any applications that genuinely improve our business today. We will continue to wait until truly value-creating opportunities emerge."

Undoubtedly, the majority of AI investments are currently not profitable. In his widely noted article "AI’s $600B Question" in June, Sequoia’s David Cahn did a rough calculation highlighting the gap between investments in AI infrastructure (primarily for Nvidia GPUs and building the data centers to run them) and the revenue ultimately generated in the AI ecosystem. If one excludes the resale of computing power by hyperscalers (Azure, Google Cloud, AWS, CoreWeave) and focuses on AI services like OpenAI’s ChatGPT or the Copilots from GitHub and Microsoft, global revenue in 2024 will barely exceed $20B. Goldman Sachs discusses similar arguments in the report "GenAI: Too much spend, too little benefit?".

However, waiting is a dangerous strategy, especially in the German economy. This is evident in the transformations within the automotive, chemical, and steel industries. Many business models and industrial logics that proved themselves for decades are now changing due to decarbonization, and they cannot be future-proofed by simply swapping individual components one-for-one (e.g., electric instead of combustion drive, hydrogen instead of natural gas/coal). Similarly, no switch can be flipped to make companies "AI-ready" overnight. A programmatic approach, weighted and tailored to the specific company, should take the following elements into account:

1) Performance Improvement // Targeted transformation of "mature" functions

In selected areas such as customer service or IT, significant productivity gains can already be achieved today through the use of AI. An example of this is Klarna, which introduced an OpenAI-based AI assistant in the first quarter; in a first step, it took over about one-third of the workload, thereby replacing around 700 FTEs. Another example is Vodafone, which announced an investment of €140m this year alone to revamp its customer service chatbot, Tobi, also using OpenAI’s LLM. Another "mature" function is IT, where we see productivity increases of up to 30%, with new software development in particular being considerably simplified. Even rapidly growing companies like Meta and Microsoft have each laid off over 15,000 employees in multiple waves over the past three years. In total, technology companies cut over 550,000 jobs globally between 2022 and 2024. While this development is driven by several factors, the significantly increased productivity of senior developers, for whom tools like GitHub Copilot act almost like an "Iron Man suit", plays a decisive role.

2) Organizational Capabilities // Broad development of AI skills

Even in areas where no value-adding AI solutions are established yet, competence in handling AI should be specifically fostered. The focus here is on experimentation and exploring potential. Only in this way can employees quickly seize opportunities as soon as transformative AI solutions become available. Key success factors include, on the one hand, the establishment of a disciplined, iterative development process that includes reliable (financial) success measurement. This ensures that resources are used efficiently and are aligned with actual needs. On the other hand, building a network of experts and development partners is crucial. This can be supported by replicable methods for partner identification, such as hackathons, as well as a playbook that enables successful collaboration with external developers and solution providers. This creates a solid foundation to quickly identify necessary competencies outside one's own organization and to successfully implement and operate AI use cases. An example of this is S&P Global, which recently commissioned Accenture to conduct systematic GenAI training for all 35,000 employees. In our experience, such programs are most effective when learning and practical application are combined so that employees can develop and implement concrete solutions for the actual challenges in their work environment.

3) Portfolio Strategy // Active risk management and inorganic options for action

Analogous to the practices of leading private equity funds and active fund managers, companies should specifically review their portfolios for the opportunities and risks of developments in the field of Artificial Intelligence. In the technology sector, market valuations of AI winners and losers diverged early on. An example of this is Chegg, the US market leader for online tutoring, whose value has dropped by more than 90% since November 2022 because generative AI cannibalized its existing offering. In contrast, Duolingo, the world's leading language learning app, is considered a winner of AI development: thanks to significant productivity gains in the creation of lessons and software, as well as the introduction of the premium offering Duolingo Max, supported by generative AI, the company was able to significantly increase its earnings potential. In many other areas, the effects of AI are less obvious or will only become visible in the future. Nevertheless, these examples show how crucial it is to engage intensively with the topic in order to manage the portfolio early and actively.

4) FinOps // Basis for economic viability and scalability of AI projects

FinOps is far more than just a framework and toolkit for monitoring, measuring, and managing cloud resource consumption. It embodies a cultural shift that focuses on optimizing IT costs across functions and divisions. A robust FinOps practice forms the foundation for developing precise cost attribution, making the specific costs for AI services transparent per team and project. This enables targeted optimizations: for example, for a chatbot that frequently receives similar questions, it could be checked whether results can be cached to provide answers more cost-effectively instead of repeatedly sending API calls to an LLM. Likewise, prompts could be reviewed to determine which foundation model can answer the respective request most economically. In this and similar ways, FinOps contributes significantly to the realization of economically viable AI use cases. However, FinOps itself also benefits from advances in AI: Natural Language Processing makes it possible to directly answer questions like "How high will the costs for this workload be?" or "How can the costs of the workload be reduced without compromising performance?". FinOps is thus not only the basis for the economic development of AI applications but also a beneficiary of technological advances that further increase its effectiveness.

5) Strategic Workforce Management // Development paths and involvement of employee representatives

With the introduction of artificial intelligence, few roles will disappear completely, but almost all will change. In addition to these changing requirements, the weightings will also shift significantly. This requires more than just a simple "hire and fire." Rather, it requires comprehensive transparency regarding impending changes as well as clearly defined development paths to successfully guide the majority of the workforce through this transition. At the center of the process is the analysis of roles whose importance will decrease in the future, and the comparison of their competency profiles with those of growing roles. For example, "Accountants & Auditors" possess strong mathematical skills and work in a structured and conscientious manner. These characteristics make them excellent candidates for positions such as "Data Scientists" or "Cyber Security Analysts." Based on this, development paths are established that enable the systematic further development of the workforce. Employee representatives and unions should also be involved in this process at an early stage. In our experience, it is crucial to speak openly about a healthy balance between protecting the interests of employees and securing competitiveness through the use of AI. Together, viable models can be defined that do justice to both sides.
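The caching idea mentioned under FinOps (element 4 above) can be illustrated with a short Python sketch. It is a simplified example under stated assumptions, not a production design: `CachedAnswerer`, `normalize`, and `fake_llm` are hypothetical names, `fake_llm` merely stands in for a paid LLM API call, and the cache only matches questions that are identical after lowercasing and whitespace cleanup.

```python
import hashlib
from typing import Callable, Dict


def normalize(question: str) -> str:
    """Lowercase and collapse whitespace so trivially different inputs match."""
    return " ".join(question.lower().split())


class CachedAnswerer:
    """Wraps an LLM call with an in-memory cache and simple usage counters."""

    def __init__(self, call_llm: Callable[[str], str]) -> None:
        self._call_llm = call_llm          # hypothetical: any function that queries an LLM
        self._cache: Dict[str, str] = {}
        self.llm_calls = 0                 # number of (billable) LLM requests
        self.cache_hits = 0                # number of requests served from the cache

    def answer(self, question: str) -> str:
        key = hashlib.sha256(normalize(question).encode("utf-8")).hexdigest()
        if key in self._cache:
            self.cache_hits += 1
            return self._cache[key]
        self.llm_calls += 1
        result = self._call_llm(question)
        self._cache[key] = result
        return result


def fake_llm(question: str) -> str:
    """Stand-in for a real API call (assumption for this sketch)."""
    return f"Answer to: {normalize(question)}"


bot = CachedAnswerer(fake_llm)
bot.answer("How do I reset my password?")
bot.answer("  how do I RESET my password? ")
print(bot.llm_calls, bot.cache_hits)
```

The two counters are the kind of data a FinOps practice needs for cost attribution per team and project. Catching questions that are similar in meaning but worded differently would require a different matching technique, which this sketch does not attempt.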

Cloud Sovereignty and the GPU Gap

Schwarz Digits reported a remarkable €1.9bn in revenue, proving there is a market for digital sovereignty. But can a local champion compete with the hyperscalers' rich ecosystems and massive AI compute clusters? A look at why Germany might need an "Andreessen Horowitz moment" for infrastructure.

Schwarz Digits – effectively the AWS of the Schwarz Group (Lidl, Kaufland) – reported €1.9 billion in revenue for 2023. That is remarkable and corresponds to roughly 3-6% of the German cloud market just a few years after its founding. The value proposition is "digital sovereignty" and full compliance with German data protection standards.

What are the experiences when migrating workloads to STACKIT, the cloud platform of Schwarz Digits? The mature ecosystems of AWS or Azure in particular are difficult to replicate. What role do these differences play in purchasing decisions and operations?

Another interesting aspect will be the provision of current NVIDIA H100s and subsequent chip generations for training AI models. Meta announced at the beginning of the year that it intends to integrate 350,000 H100s into its data centers by the end of the year. Microsoft (which also provides the infrastructure for OpenAI) and the other hyperscalers will likely build up to a similar extent. At this scale, Schwarz Digits (which already has some A100s and H100s) certainly does not need to invest, partly because no leading foundation models are being trained in Germany. But a smaller cluster would definitely make sense. I see an analogy here to the VC fund Andreessen Horowitz, which makes 20,000 H100s available to its start-ups. German industry and public administration could benefit from a similar initiative, and Schwarz Digits could play a central role in this.

The Perfection Trap

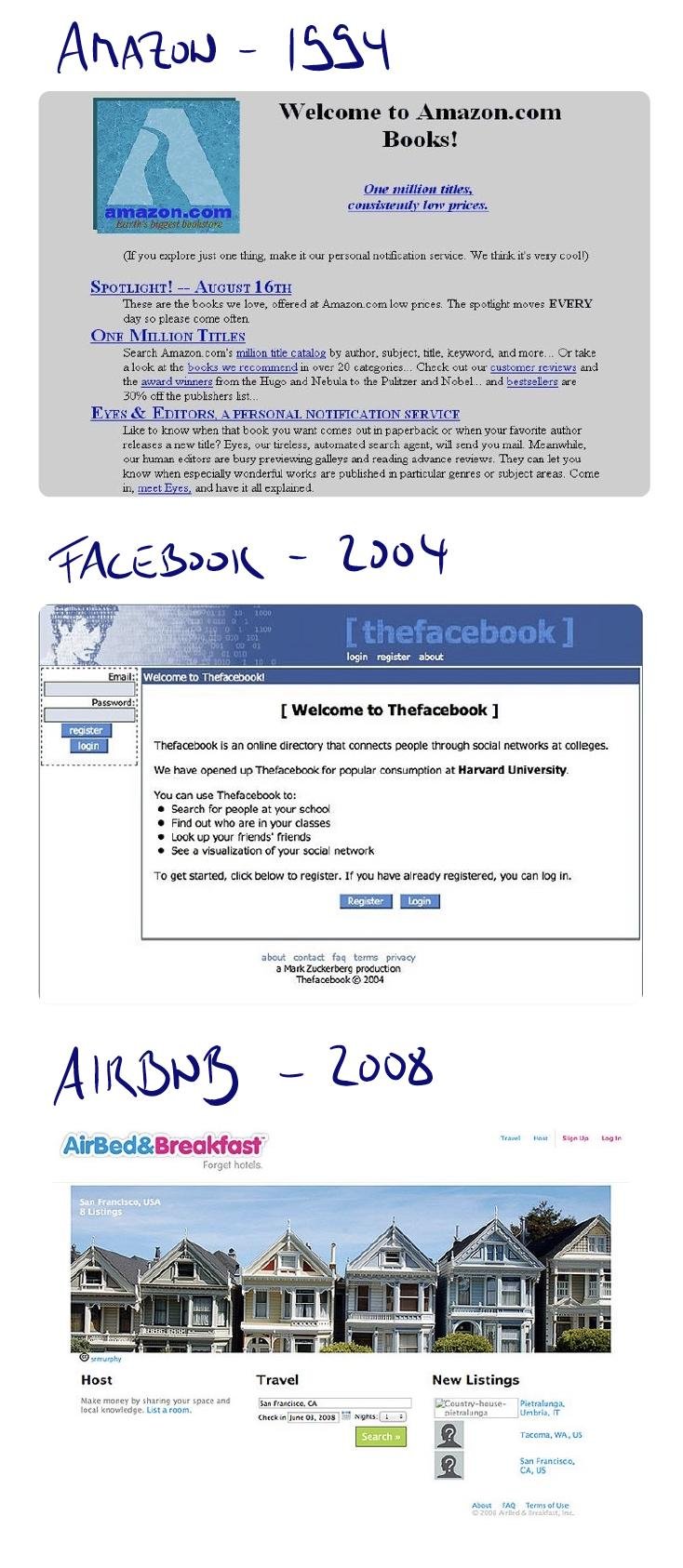

If you are not embarrassed by the first version of your product, you’ve launched too late. Visual evidence of why perfectionism kills innovation.

These screenshots capture the spirit of the MVP (Minimum Viable Product). If you know the concept well, you are probably also familiar with the common resistance among employees. Coming from a culture that does not tolerate failure, they have a hard time internalizing the imperfection that an MVP requires. As LinkedIn co-founder Reid Hoffman famously said: “If you are not embarrassed by the first version of your product, you've launched too late.” In my experience, these images convey the message better than words.

Operationalizing Culture

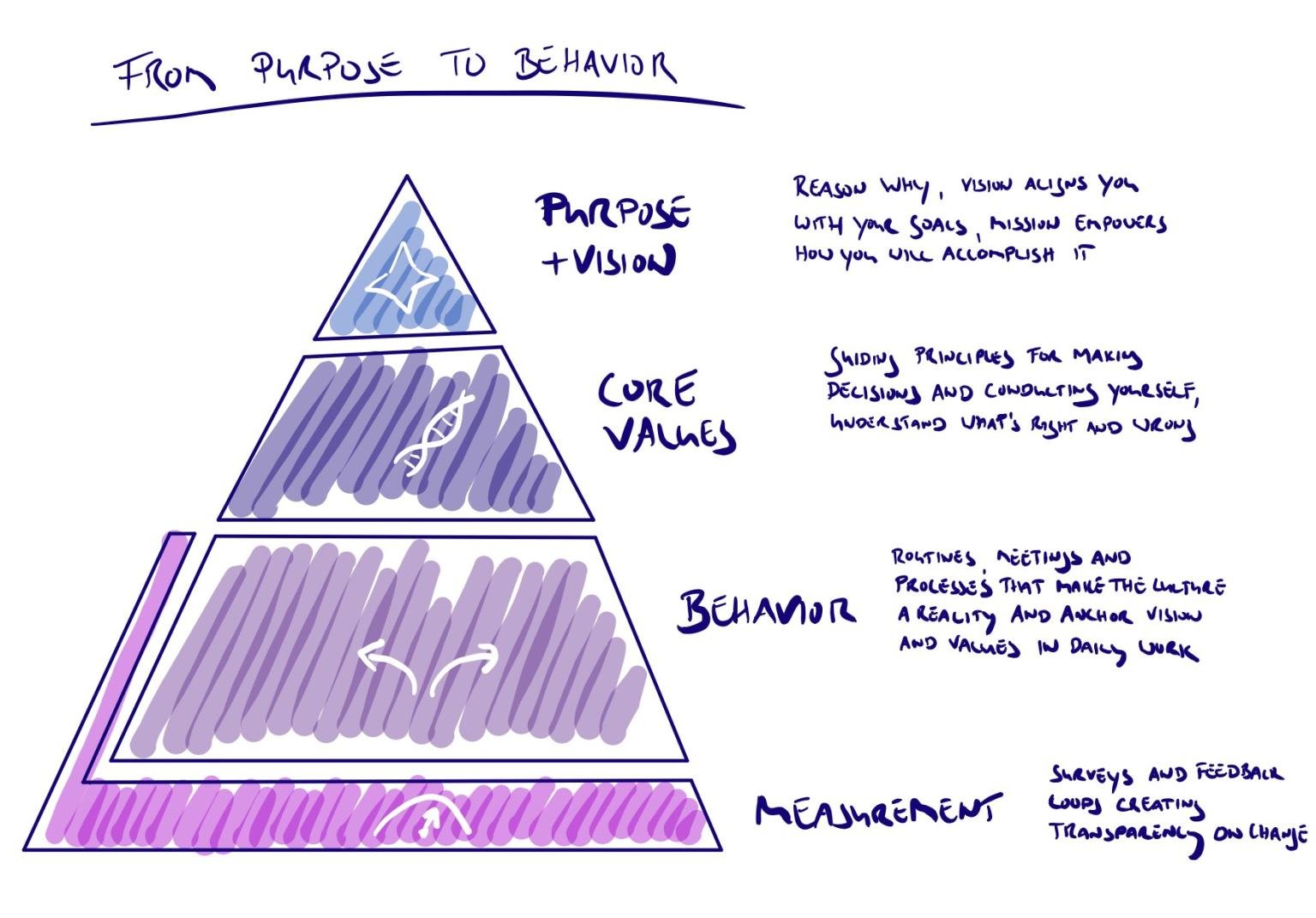

How to turn cultural change into a manageable process. Breaking down the intangible into three actionable areas: leadership alignment, daily ceremonies, and continuous measurement.

Often companies are challenged to change or improve their culture. While everyone knows that culture eats strategy for breakfast, only a few have a clear plan for building a winning culture.

This framework has proven very helpful in operationalizing such a plan. It typically breaks down into three areas of action. The first is an exercise with the leadership team to define purpose, vision, and core values. Zappos does this famously well and publishes openly about it. The second, more comprehensive task is to determine the ceremonies and rituals that foster vision and values in daily work. This also encompasses refining major existing meetings, such as all-hands or regular department gatherings, to ensure they promote the targeted behavior. The third is some form of continuous measurement to create a closed feedback loop. There are various great tools available that combine regular pulse surveys, anonymous feedback, and support for people managers' one-on-ones.

As always, let me know if you would like to discuss how this applies to your situation.

Overtaking in the Rain

Economic downturns act as a catalyst for disruption. A look at the structural reasons why startups outperformed incumbents during the subprime crisis, and how established organizations can replicate that speed through cross-functional teams and staged funding.

Formula 1 legend Ayrton Senna once said: 'You cannot overtake 15 cars in sunny weather, but you can when it's raining.' These times of COVID-19-induced economic hardship are 'bad weather' that only a few were prepared for. The obvious question on everybody's mind is: how can we use this extremely challenging situation in a positive and value-creating way for our business? What sets the companies that emerge strengthened from such a crisis apart from the rest?

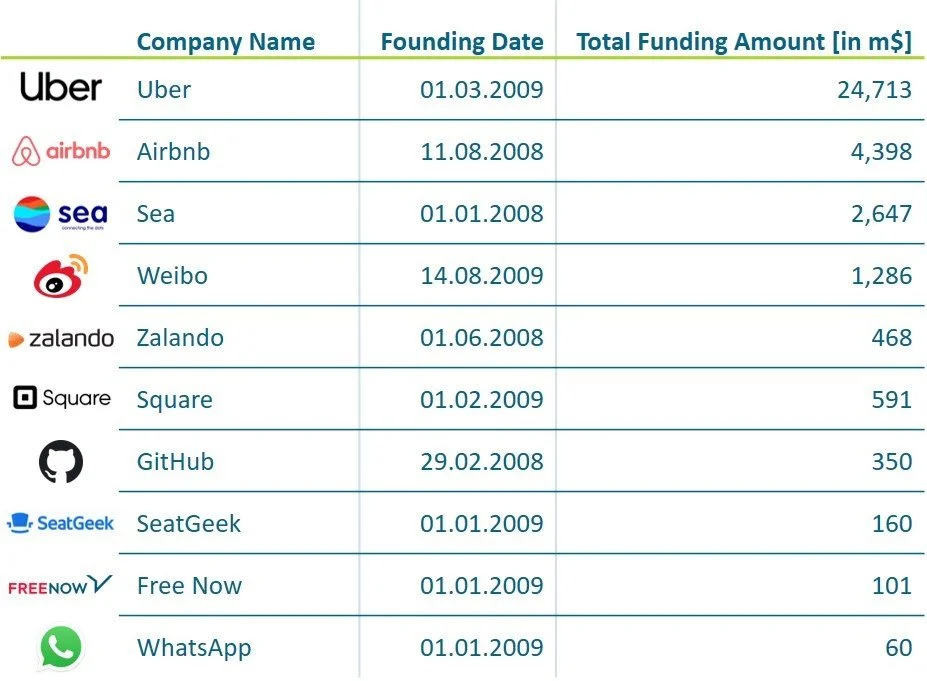

Looking at the most recent crisis - the subprime crisis - reveals a clear pattern: Start-ups fare well in these circumstances. Several companies founded in 2008 and 2009 are among the most successful new businesses of the past decade. These now-famous companies defied the adverse economic conditions and challenged incumbents in their respective markets. Up to this point, they have amassed combined funding of ~35bn USD – equal to the entire GDP of Bahrain or Bolivia.

For many successful start-ups of that era, the crisis was a double-edged sword. The economic recession made it difficult to secure investor funding, and customers' willingness to pay for innovative services dwindled. Nevertheless, the crisis-induced disruption in market and employment conditions acted as a catalyst for many start-ups.

Take Airbnb and Uber as examples: the large spikes in unemployment and the need to save money wherever possible, for instance on transport or holidays, provided these two companies with ideal market conditions for their business models. Customers were able to save money compared to traditional competitors' offerings, while drivers and hosts could earn significant additional income. Similarly, WhatsApp enabled people to save money at a time when most people still paid per text message. Hence, these start-ups' success is not mere coincidence but a result of addressing the economic needs heightened by the crisis.

Another critical factor has been the lean start-up approach based on customer focus and iterative, agile product development. It allowed start-ups to mitigate financial bottlenecks and advance their innovations very efficiently. This quality propelled the most successful start-ups onto an equal footing with incumbents, or even beyond them, within just a decade.

So how can established organizations emerge stronger from the crisis? We have compiled four lessons learned from our work. Apply them to your business like a set of rain tyres to gain traction and speed.

1) Cross-everything teams

Heterogeneous, well-balanced teams deliver better results. When team members cover all the steps of the process or value chain, the solution has a better chance of working end-to-end.

2) Agile development and design thinking

Beyond the buzz, these working practices, which center on the customer and on iterative, hypothesis- and data-driven project execution, deliver a performance increase of at least 40%.

3) Staged funding

Overfunded projects tend never to find product-market fit. Strictly staged funding linked to objectively measurable goals provides teams with focus. Initial funding should not cover more than three months of runway, with periods rising to one year in later stages. Reporting on the operational KPIs should be available weekly.

4) Accountable ownership

One key reason lean start-ups thrive is the commitment of the management team. Established organizations can match neither the financial upside nor the daunting threats that venture founders face. But they can increase accountability, for instance by creating ownership at sponsor level in addition to operational responsibility, and by communicating frequently and transparently.

Extra tyre: Modern technology stack

A service-oriented architecture with micro-services loosely coupled by APIs is much more flexible than traditional set-ups. Add the partnering capabilities to quickly find the right partner for the development challenge at hand and technology becomes a booster instead of a drag.

This works for initiatives to increase efficiency as well as for new business projects. Look to leverage your CDO organization or Digital Transformation teams, as they have ample experience with these practices.

Thanks to Marc Buchner and Conrad Bethge who contributed to this article.

The Limits of Robotic Process Automation

Why traditional RPA and ITSM tools often lead to "Ticket Purgatory" rather than autonomy. A case study on moving beyond brittle scripts to Machine Reasoning—using semantic ontologies to achieve a 90% automated resolution rate in complex IT environments.

Is your IT helpdesk drowning in tickets? Does incident handling consume your DevOps teams? Are you struggling to hire IT specialists and retain them in the long term? Are you grappling with innumerable systems and tools that require niche skills and many years of experience?

You are not alone. One CIO faced a backlog of more than 2,000 tickets; regular maintenance tickets were already being ignored, and critical development milestones were 1.5 months overdue. The situation escalated further as IFRS-related incident and development tickets for specialized on-premise finance applications piled up. The department concentrated on fast server deployments and “quick and dirty” fixes, which only exacerbated the problems going forward. This is outright ticket hell.

But many more IT departments are at least in what we call ticket purgatory. By mustering all their resources, they manage to process IT tickets within 24 hours. Still, the effort interferes with IT operations, especially development, maintenance, and IT’s participation in corporate missions such as the development of future business models.

Advanced ITSM tools like ServiceNow and Robotic Process Automation (RPA) alleviate the problems but do not resolve them. Automation rates remain low because IT environments are mostly too complicated and change too fast for fixed automation approaches. Meanwhile, maintenance requirements for the installed robots increase quickly, and quality issues appear on the horizon. Changed user interfaces after updates, swapped columns in relational databases, unknown error codes, and many other routine IT changes cause considerable trouble for fixed solutions.

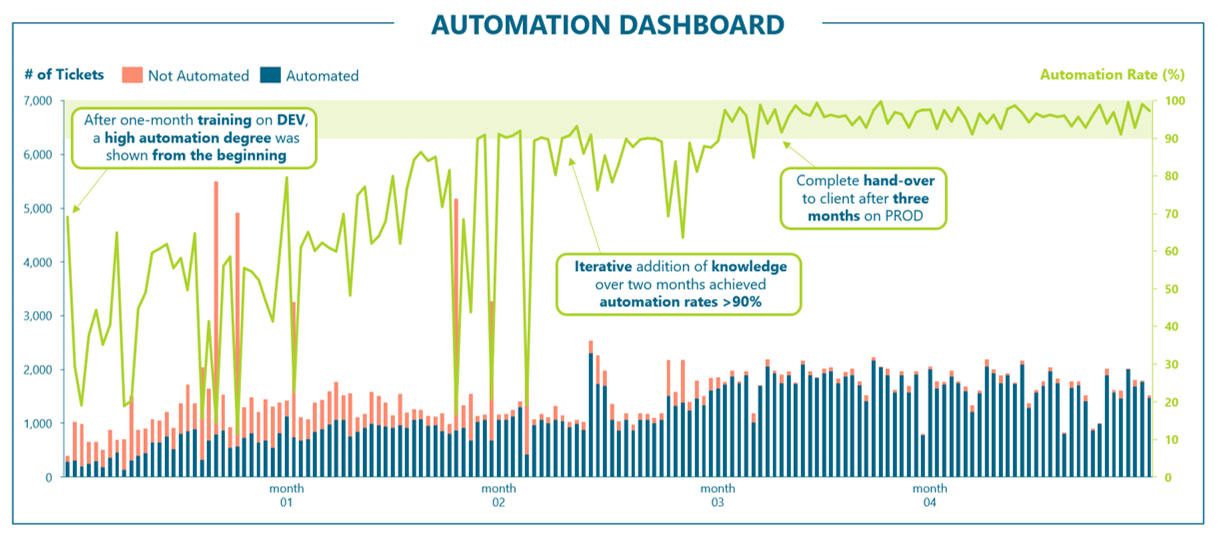

The dashboard shows an automation rate of more than 90% of all incident tickets, reached in just four months. Such results are achieved by applying Machine Reasoning, which orchestrates existing ITSM and RPA tools or automates digital processes directly. In a first step, we created a representation of the real world. In this case, we used the MARS ontology (Machine, Application, Resource, Software) to describe the entities and their relationships, and imported the customer’s CMDB. In the second step, we built a knowledge repository based on our library, which allows many standard tickets to be solved. In parallel, we adapted connectors and action handlers to the customer’s systems. Finally, we performed iterative functional and integration testing before the production go-live after one month. While the average automation rate was already about 50% at the beginning, you can see spikes of manually solved tickets during the first couple of months. To address those ticket types and further increase the automation rate towards the 90% target, we continuously added knowledge items over three months. During this phase, designated members of the client organization were enabled to run the solution. The complete hand-over took place once more than 90% of all tickets were consistently solved automatically.
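To make the mechanics more tangible, here is a heavily simplified Python sketch of the general idea: entities from a CMDB are typed along the four MARS categories, knowledge items describe when an action applies, and tickets without a matching knowledge item fall back to manual handling. It is not the actual Machine Reasoning engine described above. All class names, fields, rules, and tickets are hypothetical and were invented for this illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Entity:
    """A configuration item, typed by one of the four MARS categories."""
    name: str
    kind: str  # "Machine", "Application", "Resource", or "Software"
    depends_on: List[str] = field(default_factory=list)


@dataclass
class KnowledgeItem:
    """A reusable rule: when it applies to a ticket, it proposes an action."""
    name: str
    applies: Callable[[dict, Entity], bool]
    action: str


def resolve(ticket: dict, cmdb: Dict[str, Entity],
            knowledge: List[KnowledgeItem]) -> Optional[str]:
    """Return the first applicable action, or None if a human must take over."""
    entity = cmdb.get(ticket["affected"])
    if entity is None:
        return None
    for item in knowledge:
        if item.applies(ticket, entity):
            return item.action
    return None


def automation_rate(tickets: List[dict], cmdb: Dict[str, Entity],
                    knowledge: List[KnowledgeItem]) -> float:
    """Share of tickets for which an automated action was found."""
    if not tickets:
        return 0.0
    solved = sum(1 for t in tickets if resolve(t, cmdb, knowledge) is not None)
    return solved / len(tickets)


# Hypothetical mini-CMDB, knowledge base, and tickets.
cmdb = {
    "erp-db": Entity("erp-db", "Software", depends_on=["srv-01"]),
    "srv-01": Entity("srv-01", "Machine"),
}
knowledge = [
    KnowledgeItem(
        name="restart-stopped-service",
        applies=lambda t, e: e.kind == "Software" and t["symptom"] == "service_down",
        action="restart_service",
    ),
]
tickets = [
    {"affected": "erp-db", "symptom": "service_down"},
    {"affected": "srv-01", "symptom": "disk_full"},
]

print(automation_rate(tickets, cmdb, knowledge))

# Adding a knowledge item for the unsolved ticket type raises the rate.
knowledge.append(
    KnowledgeItem(
        name="clean-temp-files",
        applies=lambda t, e: e.kind == "Machine" and t["symptom"] == "disk_full",
        action="clean_temp_files",
    )
)
print(automation_rate(tickets, cmdb, knowledge))
```

The second half of the example mirrors the ramp-up described above: each knowledge item added for a previously manual ticket type lifts the share of tickets that can be solved automatically.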

So what is it for you? Heaven or hell?

Thanks to my co-author Felix Hornung.